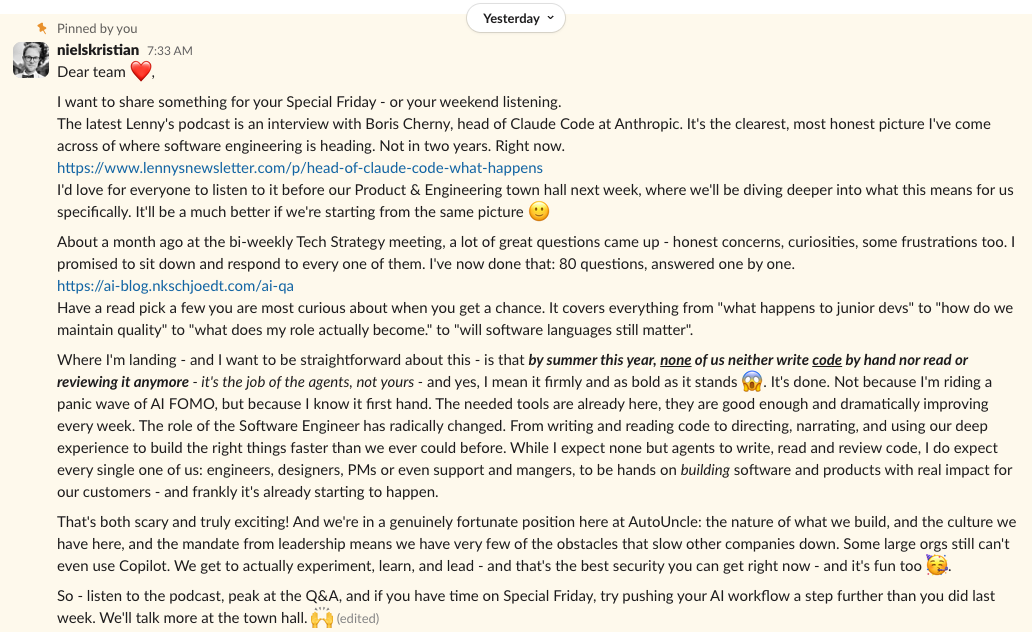

The Message

Yesterday I shared a message with my engineering team that I'd been building towards for a while. The core of it was this: by summer this year, none of us will write code by hand, and none of us will read or review it either. That's the job of the agents now. I meant it firmly, and I meant it exactly as boldly as it sounds.

The response was what you'd expect when you say something like that to a room full of experienced engineers. Some excitement. Some curiosity. And, underneath, a kind of quiet unease that I've been seeing more and more these days — especially from the most senior people in the room.

That unease is what this post is about.

The Paradox

Here's the thing I keep coming back to: the senior developers, the tech leads, the architects — the ones with the deepest understanding of how software is built — are often both the people who shine the most with AI and the ones who struggle the most emotionally. Those who have embraced it are producing extraordinary work. Those who haven't are caught in something that feels a lot like grief.

This post is about that struggle. Not to lecture anyone, and not to push the reluctant. Just to say: I see what's happening, and I think we need to talk about it honestly.

The Banner Holders

If you're a senior developer or a tech lead, chances are that a big part of your identity — your professional self-worth — is tied to being the person who upholds standards. You're the one who pushed for small, reviewable pull requests. You taught juniors to write clean, readable code. You insisted on proper separation of concerns, thoughtful commit messages, and disciplined sprint planning. You were the banner holder for quality.

That role mattered enormously. It was part of the job description, whether it was written down or not. Seniors were the guardians of the craft. You carried the collective wisdom — the things we told each other over and over until they became second nature — and you made sure the team lived up to them.

Here's the hard part: many of those things you've been holding the banner for are becoming irrelevant. Not because they were wrong. Because the context they existed in has changed beyond recognition.

The Context Changed — You Didn't Fail

I think this is the single most important thing to understand, and it's where I see senior people get stuck most often: the rules didn't fail. The context they were written for changed.

Think about it. We told each other to write small pull requests. We told each other to break code into reviewable chapters. We told each other that clean, readable code was paramount. We told each other to pick elegant languages and maintain tidy dependency trees. These weren't arbitrary opinions — they were hard-won responses to real constraints. Humans needed to read each other's code. Humans needed to review it. Humans needed to understand it quickly, with limited working memory and limited time.

AI agents don't share those constraints. They can comprehend hundreds of files in a single context window. They don't need code broken into chapters — in fact, chunking things up can hurt their comprehension by fragmenting the context. They don't care if your code is in Rust or Ruby. They don't get tired. They don't lose focus after lunch.

So when the rules shift, it's not that you were doing it wrong all along. It's that the audience changed. You were optimising for human readers. Now the primary reader is a machine.

What's Actually Shifting

Let me get concrete. Here are some of the paradigms that are fading — and what's replacing them. For each one, the old way was right in its time. The new way is right in ours.

Small commits existed because humans needed chapters. A reviewer who didn't write the code needed a story — first this, then this, then that — to follow along. AI agents don't need that story. They read the whole thing at once, and actually prefer it that way. Breaking a change into tiny PRs fragments the context and makes the AI's job harder, not easier. It's effort spent on a purpose that's no longer there. (If you want to go deeper on why PR size isn't actually what determines risk — and what does — Shifting Gears has the detailed argument.)

Code review is changing as well, and the point isn't to remove human review; it's to move it to where it actually matters. Instead of spending mental energy reading through diffs and nit-picking variable names, humans should be investing their review capacity upstream: Does this solve the user's problem? Is this the right domain model? Is this the architecture we believe in? The implementation (making it pass specs, deploy, and perform) is becoming the easy part. And even when mistakes happen, AI can find context from monitoring tools in minutes and fix issues faster than any human reviewer ever could. We finally get to deploy our brainpower where we always said it should go: close to the user.

I know the next point will be controversial: readable code is becoming a preference rather than a requirement. The idea that clean, maintainable, readable code is important is deeply ingrained, and for good reason. But readability is a need of the human brain, not of the AI. Agents don't care if they're reading Rust or Ruby. They don't complain about formatting. They comprehend whatever you throw at them. So the whole notion of some languages being "prettier" or more "readable" than others is becoming a human preference, not a technical requirement. What matters now is whether the code is efficient for AI to work with.

This is why so many teams are moving towards monorepos — backend, frontend, even native apps in one repository. For a human, that would be overwhelming. For an AI agent, it's a gift. It can grasp how an API endpoint connects to the frontend, what the mobile app expects, how GraphQL ties it all together — in one context window. The fragmentation we built to manage human cognitive limits becomes friction when the reader is a machine.

We planned in sprints because writing code was expensive. Human salaries, limited capacity, high coordination costs — we wanted to be absolutely sure we were building the right thing before committing resources to a two- or four-week cycle. When implementation becomes fast and cheap, those rigid containers lose their reason for being. The emphasis shifts from "plan the sprint carefully" to "keep your priorities right and stay fluid." Direction over schedules.

The MVP isn't dead — its purpose is exactly right: get into the market fast, test for real fit, and collect feedback that actually sticks. What's changing is the deployment strategy. When building becomes 5x faster, "minimum" can be far more ambitious than it used to be. You can validate bolder ideas without the old trade-off of cutting everything to the bone. But — and this matters — that doesn't mean you should over-engineer things. Over-engineering directly conflicts with the purpose of an MVP: speed to learning. The anchor stays the same: does it serve the purpose? I explore this idea further in The Price of Software Just Hit Zero — what happens when anyone can build anything. And if you're building products that include AI in the solution itself, design for where models will be in six months, not where they are today — or your product will be outdated before it ships.

Senior developers spent years being protective about dependencies — every library added was a liability, another thing to maintain, another potential breaking change. That instinct came from a world where updating a library that touched hundreds of files was a serious project. With AI, it's a trivial task. Dependency updates can be almost fully automated. The real question has flipped: do you even need the external library, or can AI just build the thing itself? Custom software for custom needs — that's the new default.

The Guilt

Now I want to talk about something that doesn't come up enough: guilt.

I've met many developers — experienced, talented people — who describe their AI experiences with shame. They'll say things like: "The AI added a library and I didn't spot it." Or: "I let through a gigantic pull request because the AI made it all in one go and the changes were related." Or: "It mixed concerns in one PR that should have been separate." They say these things the way you'd confess to a mistake. Quietly. Apologetically.

And that's the crux of it. When they work with AI, they can feel the work naturally pulling them towards a different process — larger changes, less fragmentation, less ceremony. It feels wrong because it violates everything they've been teaching and reinforcing for twenty years. But the old rules are what's creating the friction, not the AI.

This is more than a productivity problem. It's a deeply personal one. If you've built your professional identity around upholding certain standards, and those standards suddenly become counterproductive, it can feel like your entire career is being called into question. If I have to say goodbye to these rules, does that mean I was wrong the whole time? Have I been wasting twenty years?

The answer is no. Emphatically no. Those rules were right. They worked. They were the best response to the constraints that existed at the time. You weren't wrong then. The ground has just shifted under your feet.

A New Code of Conduct

What I think we need — urgently — is to help each other build a new set of rules. A new code of conduct that fits the reality of AI-driven development. Not because the old one was bad, but because holding each other to standards that no longer apply is actively holding us back.

Right now, the developers who are advancing fastest with AI are the ones who've given themselves permission to let go. They've stopped policing themselves against old checklists and started leaning into what the AI does naturally. They're making bigger changes in single passes. They're reviewing plans instead of diffs. They're validating bolder ideas instead of cutting everything to the bone.

But many others are still stuck — not because they lack skill, but because they lack permission. They need to hear from their peers, from their leads, from our community, that it's okay. That the old rules served us well and we can honour them by moving on, not by clinging to them.

I wrote about what happened when we actually tried this — giving a team explicit permission to let go — in The Fire Ceremony. It involves a campfire, a ritual, and an app I built 30 minutes before going live.

Fewer Errors, Not Zero

One last thing. There's a misconception that if a computer does something, it should be flawless. Zero tolerance. We don't extend that expectation to human developers — everyone ships bugs, everyone makes mistakes — but somehow we expect AI to be perfect.

It won't be. There will always be a translation layer between human intent and machine output. Sometimes the AI will fill gaps in context incorrectly. Sometimes it will guess wrong or take a direction you didn't discuss. That's the nature of the interface between two kinds of intelligence.

But here's what matters: the overall error rate drops. The number of bugs that reach production when working with AI is, in my experience, significantly lower than when it was just humans. The goal was never perfection — it was always about building the best thing we could with the tools we had. Now we have better tools. Fewer errors, not zero errors, is the real benchmark. Just as it always was.

This Isn't Loss — It's Liberation

For years, we told ourselves we should move closer to the user. We should think more about the problem we're solving and less about the syntax we're writing. We should spend our energy on strategy, on understanding, on creating the right thing — not just the thing right.

We were right about all of that too. We just couldn't do it, because so much of our energy was consumed by the code itself. Reading it, writing it, reviewing it, refactoring it, arguing about it.

Now that burden is lifting. And what's left is the work we always knew mattered most: understanding the user, thinking about the problem, making the right calls about what to build and why. The feedback loops that drive great knowledge work (the sparring, the challenge, the friction between ideas) are being democratised by AI in ways that change not just how we work, but where we need to be to do it well. That's where your twenty years of experience becomes more valuable than ever, not less.

So if you're a senior developer feeling the weight of this change, I want to say it clearly: it wasn't wrong. None of it was wrong. You built your career on principles that were exactly right for their time. And the very skills that made you a banner holder — the discipline, the standards, the deep care for quality — are exactly what's needed now to help write the new rules. Swedish register data already shows that young workers are being displaced first while experienced workers become more valuable — your accumulated judgement is the thing AI can't replicate.

The map looks different. But your experience is still the compass.

If you're ready to start building new habits, Shifting Gears has the practical dos and don'ts I've learned along the way. For the deeper playbook — principles, tactics, and daily workflows — there's the AI Cookbook. And if you're wondering how to enforce quality in this new world without relying on the old ceremonies, Don't Just Tell It. Enforce It. is about building automated guardrails that work better than rules ever did.